Recently, two studies from SIST Professor Ha Yajun’s group in the Reconfigurable and Intelligent Computing Lab were accepted by the ACM/IEEE Design Automation Conference (DAC). The studies are entitled “TAIT: One-Shot Full-Integer Lightweight DNN Quantization via Tunable Activation Imbalance Transfer” and “Bitwidth-Optimized Energy-Efficient FFT Design via Scaling Information Propagation”. DAC is the most authoritative international conference in the field of electronic design automation (EDA) and embedded systems, with a focus on new tools and methods in chip, circuit, and system design. The 2021 conference will be held in San Francisco, CA from December 5th to 9th, bringing together scientists, researchers and industry practitioners to exchange ideas and inspire future research.

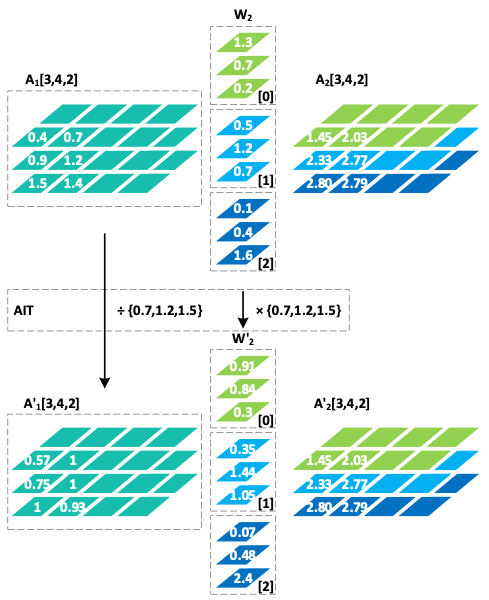

The first work is about lightweight neural networks. In recent years, lightweight neural networks have been widely used in edge computing, with typical application scenarios including autonomous driving, object detection on drones, intelligent security and face recognition (Figure 1). By decomposing the traditional convolution into a depthwise convolution and a pointwise convolution, lightweight neural networks greatly reduce the number of parameters and computations. However, they suffer a noticeable accuracy loss when combined with traditional quantization algorithms. Ha’s group found that the large inter-channel difference in the output feature maps of depthwise convolution layers is an important factor causing this accuracy loss. To improve the accuracy, they first analyzed the sources of quantization error in neural networks and found that the balance of the tensor to be quantized can be used as a predictor of its quantization error. They then proposed the Tunable Activation Imbalance Transfer (TAIT) algorithm, shown in Figure 2. The algorithm partially transfers the inter-channel difference of the feature map to the weights of the following layer, which greatly reduces the quantization error of the feature map without significantly increasing the quantization error of those weights. Experimental results showed that this method achieved excellent results on the MobileNetV2 neural network and lossless results on the SkyNet neural network.

Figure 1. Lightweight neural networks are widely used in edge computing scenarios

Figure 2. An example of activation imbalance transfer
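The idea of moving inter-channel difference from a feature map into the weights of the following layer can be illustrated with a small sketch. This is not the TAIT algorithm from the paper: the scaling rule, the `alpha` parameter, and the function names below are assumptions made for illustration, and the sketch assumes a pointwise (1x1) layer follows the feature map directly, with no activation function in between.

```python
def channel_ranges(acts):
    """Largest absolute value per channel; acts is a list of channels."""
    return [max(abs(v) for v in channel) for channel in acts]


def imbalance(acts):
    """Ratio between the widest and the narrowest channel range."""
    ranges = channel_ranges(acts)
    return max(ranges) / min(ranges)


def transfer_imbalance(acts, weights, alpha):
    """Shift part of the per-channel range difference into the next layer.

    acts:    [C][N] feature map (C channels, N values each, no all-zero channel)
    weights: [O][C] pointwise weights of the following layer
    alpha:   hypothetical knob, 0 = no transfer, 1 = fully equalized channels
    """
    ranges = channel_ranges(acts)
    widest = max(ranges)
    # Assumed rule: narrow channels get a scale below 1, the widest gets 1.
    scales = [(r / widest) ** alpha for r in ranges]
    new_acts = [[v / s for v in ch] for ch, s in zip(acts, scales)]
    # Multiplying the matching weight column by s cancels the division above.
    new_weights = [[w * s for w, s in zip(row, scales)] for row in weights]
    return new_acts, new_weights


def pointwise(acts, weights):
    """1x1 convolution: each output channel is a weighted sum of input channels."""
    n = len(acts[0])
    return [[sum(w * ch[i] for w, ch in zip(row, acts)) for i in range(n)]
            for row in weights]


# Made-up data: channel 0 is 40 times narrower than channel 1.
acts = [[0.1, -0.2, 0.05], [8.0, -4.0, 6.0]]
weights = [[0.5, -0.25], [1.0, 0.125]]

new_acts, new_weights = transfer_imbalance(acts, weights, alpha=0.5)

out_before = pointwise(acts, weights)
out_after = pointwise(new_acts, new_weights)
imb_before = imbalance(acts)
imb_after = imbalance(new_acts)

print("activation imbalance before:", imb_before)
print("activation imbalance after: ", imb_after)
```

In this sketch the layer output is unchanged up to floating-point rounding, while the activation imbalance drops from 40 to its square root. The cost is that the weight columns become less balanced, which is why a tunable, partial transfer is attractive: it trades activation quantization error against weight quantization error.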

Second-year PhD candidate Jiang Weixiong is the first author and Professor Ha Yajun is the corresponding author. The idea was first proposed in May 2020 and was entered in the 2020 DAC System Design Contest (DAC-SDC’20), where the team won second place.

The second work is about the Fast Fourier Transform (FFT), one of the most important algorithms in digital signal processing (DSP), where it is widely used to transform signals between the time domain and the frequency domain. Ha’s group observed that in some application scenarios (such as compression-decompression or transmit-receive scenarios) the FFT and the inverse FFT (IFFT) appear in pairs, which makes it possible to shift complexity and computation from one side of the pair to the other. They noted that in a fixed-point VLSI implementation the dynamic range inevitably grows at each stage of the FFT, and proposed an effective scaling method called Scaling Information Propagation (SIP) to alleviate this growth. The method makes full use of the available bit width and consumes much less additional area than state-of-the-art solutions.

Figure 3. The system diagram of FFT/IFFT
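The dynamic range growth mentioned above can be seen in a short sketch. The code below is not the SIP method: it is a plain radix-2 decimation-in-time FFT that records the peak component magnitude after every stage, with an optional conventional scaling by 1/2 per stage for comparison. The function names and the 8-point constant test input are assumptions chosen for illustration.

```python
import cmath


def bit_reverse(values):
    """Reorder values by bit-reversed index (length must be a power of two >= 2)."""
    n = len(values)
    bits = n.bit_length() - 1
    out = [0j] * n
    for i, v in enumerate(values):
        j = int(format(i, "0{}b".format(bits))[::-1], 2)
        out[j] = v
    return out


def fft_stage_peaks(samples, scale_each_stage=False):
    """Radix-2 DIT FFT that returns (spectrum, peak magnitude after each stage)."""
    data = bit_reverse([complex(s) for s in samples])
    n = len(data)
    peaks = []
    size = 2
    while size <= n:
        half = size // 2
        for start in range(0, n, size):
            for k in range(half):
                twiddle = cmath.exp(-2j * cmath.pi * k / size)
                a = data[start + k]
                b = data[start + k + half] * twiddle
                # Butterfly: each output can be up to twice as large as its inputs.
                data[start + k] = a + b
                data[start + k + half] = a - b
        if scale_each_stage:
            # Conventional fixed scaling: halve every value after each stage.
            data = [v / 2 for v in data]
        peaks.append(max(max(abs(v.real), abs(v.imag)) for v in data))
        size *= 2
    return data, peaks


# A constant input is the worst case: all energy lands in the DC bin.
samples = [1.0] * 8

spectrum, peaks_unscaled = fft_stage_peaks(samples)
scaled_spectrum, peaks_scaled = fft_stage_peaks(samples, scale_each_stage=True)

print("peak per stage, no scaling:  ", peaks_unscaled)
print("peak per stage, 1/2 scaling: ", peaks_scaled)
```

Without scaling, the peak doubles at every stage for this input, so an N-point fixed-point datapath needs up to log2(N) extra integer bits to avoid overflow. Halving after each stage keeps the peak bounded, but it discards low-order bits even when the signal would not have overflowed, which is the inefficiency that more careful scaling schemes try to avoid.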

To verify the SIP method, Ha’s group implemented the FFT VLSI architecture in Orthogonal Frequency Division Multiplexing (OFDM) and Holographic Video Compression (HVC) systems, achieving lower energy consumption and a smaller area than state-of-the-art designs.

First-year PhD candidate Liu Xinzhe and third-year PhD candidate Chen Fupeng are the co-first authors, and Professor Ha Yajun is the corresponding author.
